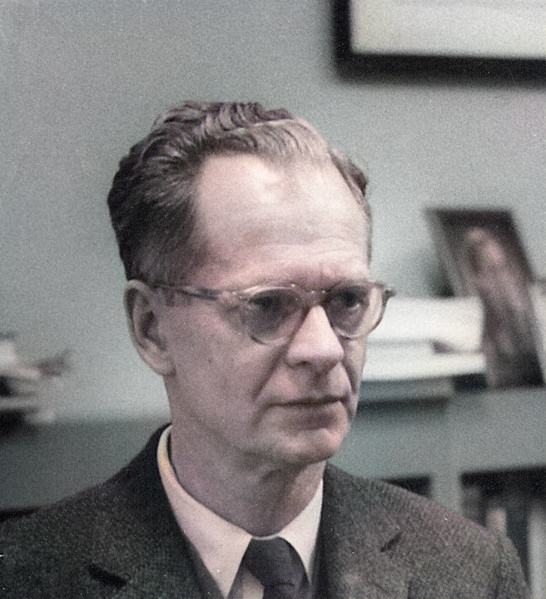

B.F. Skinner at the Harvard Psychology Department, c. 1950

On March 20, 1904, American psychologist, behaviorist, author, inventor, and social philosopher Burrhus Frederic (B. F.) Skinner was born. His pioneering work in experimental psychology promoted behaviorism, the shaping of behavior through positive and negative reinforcement, and demonstrated operant conditioning. The “Skinner box” he used in experiments from 1930 onward remains famous.

“The real question is not whether machines think but whether men do. The mystery which surrounds a thinking machine already surrounds a thinking man.”

– B. F. Skinner, Contingencies of Reinforcement: A Theoretical Analysis (1969)

B. F. Skinner – Early Years

Burrhus Frederic Skinner was born on March 20, 1904, and grew up in a small town in Pennsylvania as the son of a lawyer. He received his BA in Arts and Linguistics from Hamilton College in upstate New York in 1926. The young man badly wanted to become a writer, but he only managed to place a dozen articles in newspapers, so he began working as an assistant in a bookstore in New York. According to the biography by his daughter Julie S. Vargas, it was only there that he became aware of the writings of Ivan Petrovich Pavlov [4] and John B. Watson. He therefore enrolled in psychology at Harvard University in 1928, where he received his doctorate in 1931. For five more years, Skinner continued his research at Harvard. Over roughly the next decade, he taught and researched at several universities across the United States before returning to Harvard in 1948, where he remained for the rest of his life.

Operant Conditioning

“A person who has been punished is not thereby simply less inclined to behave in a given way; at best, he learns how to avoid punishment.”

– B. F. Skinner, Beyond Freedom and Dignity (1971)

During his research, Skinner noticed that classical conditioning did not account for the behavior most of us are interested in, such as riding a bike or writing a book. His observations led him to propose a theory about how these and similar behaviors, called operants, come about. In operant conditioning, an operant is actively emitted and produces changes in the world that alter the likelihood that the behavior will occur again. Operant conditioning has two basic purposes: increasing or decreasing the probability that a specific behavior will occur in the future. Both are accomplished by adding or removing one of two basic types of stimuli, pleasant or aversive. If the probability of a behavior increases as a consequence of the presentation of a stimulus, that stimulus is a positive reinforcer. Conversely, if the probability of a behavior increases as a consequence of the withdrawal of a stimulus, that stimulus is a negative reinforcer.
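The distinction between adding and removing a stimulus, and between raising and lowering the probability of a behavior, can be laid out as a small lookup. The following is only an illustrative sketch: the names `StimulusChange`, `Effect`, and `classify_consequence` are made up for this example, and the labels "positive punishment" and "negative punishment" are the usual textbook terms for the two probability-lowering cases, which the text above does not name.

```python
from enum import Enum


class StimulusChange(Enum):
    PRESENTED = "presented"   # a stimulus is added after the behavior
    WITHDRAWN = "withdrawn"   # a stimulus is removed after the behavior


class Effect(Enum):
    INCREASES = "increases"   # the behavior becomes more likely
    DECREASES = "decreases"   # the behavior becomes less likely


def classify_consequence(change: StimulusChange, effect: Effect) -> str:
    """Name the operant-conditioning case for a given consequence.

    "Positive"/"negative" refers to adding/removing the stimulus,
    "reinforcement"/"punishment" to the effect on the behavior.
    """
    sign = "positive" if change is StimulusChange.PRESENTED else "negative"
    kind = "reinforcement" if effect is Effect.INCREASES else "punishment"
    return f"{sign} {kind}"


# A food pellet is presented and bar pressing becomes more likely.
print(classify_consequence(StimulusChange.PRESENTED, Effect.INCREASES))
# An aversive stimulus is withdrawn and the behavior becomes more likely.
print(classify_consequence(StimulusChange.WITHDRAWN, Effect.INCREASES))
```

Note that "negative" here describes the withdrawal of the stimulus, not an unpleasant outcome for the animal: both kinds of reinforcement make the behavior more likely.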

The Skinner Box

The famous Skinner box has a bar or pedal on one wall that, when pressed (for example by a rat), causes a small mechanism to release a food pellet into the cage. So when the rat is put in the cage and (probably accidentally) presses the bar for the first time, a food pellet falls into the cage. The operant in this scenario is the behavior just prior to the reinforcer, which is of course the food pellet. Soon the rat is furiously pedaling away at the bar, hoarding its pile of pellets in the corner of the cage. A behavior followed by a reinforcing stimulus results in an increased probability of that behavior occurring in the future. If the rat is no longer given pellets when pressing the bar, it will eventually stop doing so, which is called extinction of the operant behavior. However, if the pellet machine is then turned back on, the rat will learn the behavior much more quickly than the first time. By controlling this reinforcement together with discriminative stimuli such as lights and tones, or punishments such as electric shocks, experimenters have used the operant box to study a wide variety of topics, including schedules of reinforcement, discriminative control, delayed response, and punishment.
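The sequence of acquisition, extinction, and faster relearning can be imitated with a toy model. The sketch below is not Skinner's own formalism: it assumes a simple linear update rule in which a "response strength" between 0 and 1 moves a fixed fraction toward 1 on each reinforced trial and a fixed fraction back toward a baseline on each unreinforced trial. All names and numbers (`reinforce`, `extinguish`, the rates, the 0.9 criterion) are assumptions chosen for illustration.

```python
BASELINE = 0.05    # assumed strength of accidental bar pressing
CRITERION = 0.9    # assumed strength at which the behavior counts as learned


def reinforce(strength: float, rate: float = 0.2) -> float:
    """One reinforced trial: strength moves a fraction `rate` toward 1."""
    return strength + rate * (1.0 - strength)


def extinguish(strength: float, rate: float = 0.1) -> float:
    """One unreinforced trial: strength decays a fraction `rate` toward BASELINE."""
    return strength - rate * (strength - BASELINE)


def trials_to_criterion(strength: float, max_trials: int = 1000):
    """Count reinforced trials needed to reach CRITERION from `strength`."""
    trials = 0
    while strength < CRITERION and trials < max_trials:
        strength = reinforce(strength)
        trials += 1
    return trials, strength


# Phase 1: acquisition with the pellet dispenser switched on.
first_trials, strength = trials_to_criterion(BASELINE)

# Phase 2: extinction, ten trials with the dispenser switched off.
for _ in range(10):
    strength = extinguish(strength)
after_extinction = strength

# Phase 3: reacquisition with the dispenser switched back on.
second_trials, _ = trials_to_criterion(after_extinction)

print(f"trials to learn:   {first_trials}")
print(f"after extinction:  {after_extinction:.2f}")
print(f"trials to relearn: {second_trials}")
```

In this model, relearning is faster only because ten unreinforced trials do not push the strength all the way back to the baseline. That is a deliberate simplification, not an explanation of why real animals relearn faster.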

Pigeon Guided Missiles

“Education is what survives when what has been learned has been forgotten.”

– B. F. Skinner (1964) [7]

Due to his successful work in behavioral biology, Skinner was able to conduct independent research for five years after his doctoral examination at Harvard in 1931. In 1936 he moved to the University of Minnesota in Minneapolis as a lecturer (and later professor) in psychology, where he no longer continued his experimental studies. It was not until 1944, when Germany was already using remote-controlled bombs against targets in England during World War II (V2 rockets that could still be guided in flight [5]), that Skinner’s eagerness to experiment revived: he went in search of financial support for a top-secret military project that seems grotesque today. Skinner trained pigeons whose pecking movements were to keep a long-range missile on course, apparently planning to place a pigeon in each missile, but radar-based guidance systems were chosen instead. Nevertheless, pigeons remained the most important model organisms for Skinner’s behavioral studies in later years; he never conducted experiments with rats again.

Walden Two

“If the world is to save any part of its resources for the future, it must reduce not only consumption but the number of consumers.”

– B. F. Skinner, Walden Two (1948), p. xi

In 1948 Skinner returned to Harvard as full professor of psychology and remained there until his retirement in 1974. That same year his novel Walden Two appeared, written partly under the impression of the hundreds of thousands of people returning home from the war, but it took more than a decade for it to become a widely discussed book. The novel depicts the life of a community shaped by operant conditioning and is still internationally acclaimed today.

Science and Human Behavior

In 1953, Science and Human Behavior was published, in which Skinner transferred the knowledge gained from the animal model to humans. Later in the 1950s, Skinner developed so-called teaching machines and the method of programmed learning, based on the ideas about learning theory he had already described in Walden Two. This method breaks the entire learning material down into small subunits. Correctly reproducing a subunit is “rewarded” with permission to take the next learning step, so that learners can gradually acquire knowledge through self-study and also check their own progress.
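The step-by-step logic of programmed learning can be sketched in a few lines. This is an illustration only, not a reconstruction of Skinner's teaching machines: the function `run_program`, the attempt limit, the two sample frames, and the scripted learner are all invented for this example.

```python
def run_program(frames, respond, max_attempts=5):
    """Step through small learning units ("frames") in order.

    frames       -- list of (prompt, expected_answer) pairs
    respond      -- callable (prompt, attempt_number) -> answer string
    max_attempts -- assumed cutoff after which the session stops

    A learner may only move on to the next frame after answering the
    current one correctly. Returns the number of attempts needed for
    each completed frame.
    """
    attempts_per_frame = []
    for prompt, expected in frames:
        for attempt in range(1, max_attempts + 1):
            answer = respond(prompt, attempt).strip().lower()
            if answer == expected.lower():
                # Correct reproduction is "rewarded" with the next step.
                attempts_per_frame.append(attempt)
                break
        else:
            # Frame not mastered: progress stops here.
            break
    return attempts_per_frame


frames = [
    ("A behavior followed by a reinforcer becomes ___ likely.", "more"),
    ("Withholding the reinforcer until the behavior stops is called ___.",
     "extinction"),
]

# A scripted stand-in for a human learner, who gets frame 2 wrong once.
scripted_answers = {
    frames[0][0]: ["more"],
    frames[1][0]: ["punishment", "extinction"],
}


def scripted_learner(prompt, attempt):
    answers = scripted_answers[prompt]
    return answers[min(attempt, len(answers)) - 1]


progress = run_program(frames, scripted_learner)
print(progress)
```

The returned list doubles as the self-check described above: it shows how many frames were completed and how many attempts each one took.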

Verbal Behavior

In 1957, Skinner completed over 20 years of work on Verbal Behavior, his theory of linguistic behavior. He interpreted human language as behavior that is subject to the same laws as all other behavior, and he considered Verbal Behavior to be his major work. At the same time, however, Verbal Behavior marks the beginning of the so-called cognitive turn. In the following years and decades, many psychologists turned away from behaviorism in general and Skinner’s behavioral analysis in particular, and toward cognitive psychology.

Later Years

In his later years, Skinner was very pessimistic about people’s ability to avert future threats of global proportions such as environmental degradation, resource depletion, and overpopulation. In an essay, he provided a psychological explanation for the lack of effective precautionary measures in spite of existing technical and scientific knowledge. Skinner, whose Science and Human Behavior had appeared in 1953, continued to write books and essays into old age, even after he was diagnosed with leukemia in 1989. Ten days before his death he gave his last lecture to the American Psychological Association. His daughter wrote: “He finished the article from which the speech came on August 18, 1990, the day he died.”

Paul Bloom, 4. Foundations: Skinner [8]

References and Further Reading:

- [1] M. Koren, B.F. Skinner: The Man Who Taught Pigeons to Play Ping-Pong and Rats to Pull Levers, at Smithsonian magazine

- [2] Saul McLeod: Skinner – Operant Conditioning, at Simply Psychology

- [3] B. F. Skinner at Wikidata

- [4] Pavlov and the Conditional Reflex, SciHi Blog, December 27, 2014.

- [5] A4 – The First Human Built Vessel To Touch Outer Space, SciHi Blog

- [6] Works by or about B. F. Skinner at Internet Archive

- [7] B. F. Skinner, “New methods and new aims in teaching”, in New Scientist, 22(392) (21 May 1964), pp. 483–484

- [8] Paul Bloom, 4. Foundations: Skinner, Introduction to Psychology (PSYC 110), Yale Courses @ youtube

- [9] Sobel, Dava (August 20, 1990). “B. F. Skinner, the Champion Of Behaviorism, Is Dead at 86”. The New York Times.

- [10] “Skinner, Burrhus Frederic”. History of Behavior Analysis

- [11] Swenson, Christa (May 1999). “Burrhus Frederick Skinner”. History of Psychology Archives.

- [12] Timeline for B. F. Skinner, via Wikidata
